Hi3516 (HiSilicon): train yolov5-6.0 --> convert to ONNX --> convert to Caffe --> convert to a .wk file


1 Training

1 Change the model structure: replace Upsample with ConvTranspose2d.

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   # [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [-1, 1, nn.ConvTranspose2d, [256, 256, 2, 2]],  # args: in_channels, out_channels, kernel_size, stride
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, C3, [512, False]],  # 13

   [-1, 1, Conv, [256, 1, 1]],
   # [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [-1, 1, nn.ConvTranspose2d, [128, 128, 2, 2]],  # args: in_channels, out_channels, kernel_size, stride
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3
   [-1, 3, C3, [256, False]],  # 17 (P3/8-small)
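The replacement works because a transposed convolution with kernel size 2 and stride 2 doubles the feature-map resolution, just like the 2x nearest-neighbour Upsample it replaces. The sketch below only checks that arithmetic, using the output-size formula from the PyTorch `nn.ConvTranspose2d` documentation. The helper name `conv_transpose2d_out_size` is hypothetical and not part of YOLOv5. Unlike Upsample, the transposed convolution has learnable weights, so the model must be trained with the new layer.

```python
def conv_transpose2d_out_size(size_in, kernel_size, stride, padding=0,
                              output_padding=0, dilation=1):
    """Output size of a transposed convolution along one spatial axis."""
    return ((size_in - 1) * stride - 2 * padding
            + dilation * (kernel_size - 1) + output_padding + 1)


if __name__ == "__main__":
    # kernel_size=2, stride=2 as in the head above: 20 -> 40 and 40 -> 80
    for size in (20, 40):
        print(size, "->", conv_transpose2d_out_size(size, kernel_size=2, stride=2))
```

With other kernel/stride/padding combinations the output is generally not exactly twice the input, so the Concat with the backbone feature map would fail on a shape mismatch.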

2. Export the model

1. Export the ONNX model:

(1) In export.py, set opset to 9.

(2) In models/yolo.py, modify the Detect code as follows:

# Modified for HiSilicon Hi3516DV300 export; for training, use the official Detect
class Detect(nn.Module):
    stride = None  # strides computed during build
    onnx_dynamic = False  # ONNX export parameter

    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        super().__init__()
        self.nc = nc  # number of classes
        self.no = nc + 5  # number of outputs per anchor
        self.nl = len(anchors)  # number of detection layers
        self.na = len(anchors[0]) // 2  # number of anchors
        self.grid = [torch.zeros(1)] * self.nl  # init grid
        self.anchor_grid = [torch.zeros(1)] * self.nl  # init anchor grid
        self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
        self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
        self.inplace = inplace  # use in-place ops (e.g. slice assignment)

    def forward(self, x):
        # print_feature=2
        z = []  # inference output
        for i in range(self.nl):
            x[i] = self.m[i](x[i])  # conv
            bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,85,400)
            # x[i] = self.m[i](x[i])  # **add this line**
            # x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()
            x[i] = x[i].view(bs, self.na, self.no, ny*nx)

            if not self.training:  # inference
                if self.grid[i].shape[2:4] != x[i].shape[2:4] or self.onnx_dynamic:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                # y = x[i].sigmoid()
                y = x[i]  # raw outputs; sigmoid and box decoding are left to post-processing
                # if self.inplace:
                #     y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                #     y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                # else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                #     xy = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                #     wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                #     y = torch.cat((xy, wh, y[..., 4:]), -1)
                # z.append(y.view(bs, -1, self.no))
                z.append(y)

        return z
        # return x if self.training else (torch.cat(z, 1), x)

    def _make_grid(self, nx=20, ny=20, i=0):
        d = self.anchors[i].device
        yv, xv = torch.meshgrid([torch.arange(ny).to(d), torch.arange(nx).to(d)])
        grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
        anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
            .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
        return grid, anchor_grid

# The original official Detect
class Detect1(nn.Module):
    stride = None  # strides computed during build
    onnx_dynamic = False  # ONNX export parameter

    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        super().__init__()
        self.nc = nc  # number of classes
        self.no = nc + 5  # number of outputs per anchor
        self.nl = len(anchors)  # number of detection layers
        self.na = len(anchors[0]) // 2  # number of anchors
        self.grid = [torch.zeros(1)] * self.nl  # init grid
        self.anchor_grid = [torch.zeros(1)] * self.nl  # init anchor grid
        self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
        self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
        self.inplace = inplace  # use in-place ops (e.g. slice assignment)

    def forward(self, x):
        z = []  # inference output
        for i in range(self.nl):
            x[i] = self.m[i](x[i])  # conv
            bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
            x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

            if not self.training:  # inference
                if self.grid[i].shape[2:4] != x[i].shape[2:4] or self.onnx_dynamic:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                y = x[i].sigmoid()
                if self.inplace:
                    y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                    xy = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, y[..., 4:]), -1)
                z.append(y.view(bs, -1, self.no))

        return x if self.training else (torch.cat(z, 1), x)

    def _make_grid(self, nx=20, ny=20, i=0):
        d = self.anchors[i].device
        yv, xv = torch.meshgrid([torch.arange(ny).to(d), torch.arange(nx).to(d)])
        grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
        anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
            .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
        return grid, anchor_grid

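The changes are:

1: The original permute is removed.

2: The output shape of view is changed from (bs, na, no, ny, nx) to (bs, na, no, ny * nx).

3: The post-processing code that decodes box centers and width/height is removed, and so is the cat operation.

Here is why:

1: NNIE does not support permute (i.e. transpose) on 5-dimensional tensors and only supports the 0231 order, which is too restrictive. We simply drop this layer and read the results with the matching index order in post-processing.

2: NNIE's reshape also supports only 4 dimensions, and the first dimension must be 0. To use NNIE's reshape, the grid's x and y have to share one dimension. As a result, x and y lie in the same row of the output, and we only need to pick values by their position in that row.

3: Post-processing handles the three detection layers separately, so no concat is needed.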
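Since the sigmoid and the box decoding were removed from Detect, post-processing has to apply them while reading the (na, no, ny * nx) layout. The following is a minimal sketch of that step for one detection layer, not the author's implementation. It assumes batch size 1, a densely packed row-major buffer (an on-device output blob may carry row padding that must be skipped first), and anchors given in pixels as in the model yaml. The names `sigmoid` and `decode_layer` are hypothetical. The xy/wh formulas are the ones commented out in the modified Detect above. NMS is not included.

```python
import math


def sigmoid(v):
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def decode_layer(flat, na, no, ny, nx, stride, anchors, conf_thres=0.25):
    """Decode one raw layer stored as (na, no, ny*nx), row-major, batch size 1.

    flat    : sequence of na * no * ny * nx raw (pre-sigmoid) values
    anchors : na pairs (w, h) in pixels for this layer (assumption)
    Returns a list of (cx, cy, w, h, score, class_id) in input-image pixels.
    """
    cells = ny * nx
    boxes = []
    for a in range(na):
        base = a * no * cells  # offset of anchor a
        anchor_w, anchor_h = anchors[a]
        for cell in range(cells):
            # Channel c of this anchor and cell sits at base + c * cells + cell.
            obj = sigmoid(flat[base + 4 * cells + cell])
            if obj < conf_thres:
                continue
            cls_scores = [sigmoid(flat[base + c * cells + cell])
                          for c in range(5, no)]
            class_id = max(range(len(cls_scores)), key=cls_scores.__getitem__)
            score = obj * cls_scores[class_id]
            if score < conf_thres:
                continue
            gy, gx = divmod(cell, nx)  # x and y share the last dimension
            tx, ty, tw, th = (sigmoid(flat[base + c * cells + cell])
                              for c in range(4))
            cx = (tx * 2.0 - 0.5 + gx) * stride
            cy = (ty * 2.0 - 0.5 + gy) * stride
            w = (tw * 2.0) ** 2 * anchor_w
            h = (th * 2.0) ** 2 * anchor_h
            boxes.append((cx, cy, w, h, score, class_id))
    return boxes
```

Calling `decode_layer` once per detection layer (with that layer's stride and anchors) and merging the three lists before NMS mirrors point 3 above: the layers are handled separately, so the network itself needs no concat.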

Run

python export.py --opset 9 --imgsz 640 640 --simplify --weights best.pt

Then generate the onnx-sim model:

python -m onnxsim xxx.onnx xxx-sim.onnx
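Before moving on to Caffe, it can help to confirm that the exported graph no longer contains the operators the model change was meant to eliminate. The sketch below is a small, hypothetical helper (`find_flagged_ops` is not part of YOLOv5 or onnx) that counts flagged operator types in a list of op names. The default flagged set, `Upsample` and `Resize`, is an assumption based on the Upsample replacement described above; extend it to whatever your toolchain rejects.

```python
def find_flagged_ops(op_types, flagged=("Upsample", "Resize")):
    """Count how often each flagged operator type occurs in op_types."""
    counts = {}
    for op in op_types:
        if op in flagged:
            counts[op] = counts.get(op, 0) + 1
    return counts


if __name__ == "__main__":
    # With the onnx package installed, the op list can be read like this:
    #   import onnx
    #   model = onnx.load("xxx-sim.onnx")
    #   print("opset:", model.opset_import[0].version)
    #   op_types = [node.op_type for node in model.graph.node]
    op_types = ["Conv", "ConvTranspose", "Concat", "Reshape"]  # made-up sample
    print(find_flagged_ops(op_types) or "no flagged ops found")
```

An empty result on the real model suggests the ConvTranspose2d replacement took effect; it does not guarantee that every remaining operator is accepted by the later conversion steps.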

3 Convert to Caffe

Search GitHub for the yolov5_onnx2caffe project (https://github.com/Wulingtian/yolov5_onnx2caffe).

vim convertCaffe.py

Set onnx_path (the ONNX model produced above), prototxt_path (where the Caffe prototxt will be saved), and caffemodel_path (where the Caffe caffemodel will be saved).

Run

python ./yolov5_onnx2caffe/convertCaffe.py

4 Convert Caffe to a .wk file


Note: in the .cfg file, change compile_mode = 0 to compile_mode = 1.

Notes:

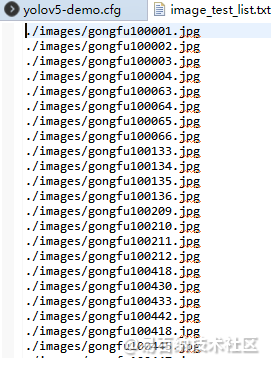

The image_list field specifies the test data, as follows

Click Run.
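The compile_mode change mentioned above can also be scripted instead of edited by hand. The sketch below is a hypothetical helper (`set_compile_mode` is not part of any HiSilicon tool) that rewrites the value in the text of a .cfg file. It accepts the `compile_mode = 0` form quoted above and, as an assumption about how some mapper .cfg files are written, a bracketed `[compile_mode] 0` form.

```python
import re

# Matches "compile_mode = 0" as well as "[compile_mode] 0" at the start of a line.
_COMPILE_MODE = re.compile(
    r"^([ \t]*\[?compile_mode\]?[ \t]*=?[ \t]*)\d+", re.MULTILINE)


def set_compile_mode(cfg_text, mode=1):
    """Return cfg_text with the compile_mode value replaced by mode."""
    new_text, count = _COMPILE_MODE.subn(
        lambda m: m.group(1) + str(mode), cfg_text)
    if count == 0:
        raise ValueError("compile_mode entry not found")
    return new_text


if __name__ == "__main__":
    print(set_compile_mode("compile_mode = 0"))
```

To apply it to a file, read the .cfg as text, pass it through `set_compile_mode`, and write the result back; keeping a copy of the original file is advisable.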
